In this week’s News of Note, Amazon continues its competition with Starlink by launching another batch of internet satellites, WhatsApp receives a ban by congressional staffers and “the ChatGPT of quantum computing” launches in Canada. Elsewhere, Texas Instruments announces a major investment in semiconductor production in the United States.

Articles Posted in Social Media Policies

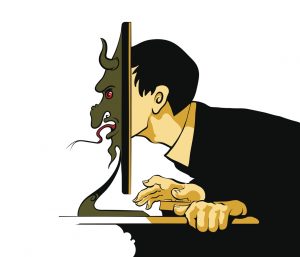

Everything in Moderation: Artificial Intelligence and Social Media Content Review

Interactive online platforms have become an integral part of our daily lives. While user-generated content, free from traditional editorial constraints, has spurred vibrant online communications, improved business processes and expanded access to information, it has also raised complex questions regarding how to moderate harmful online content. As the volume of user-generated content continues to grow, it has become increasingly difficult for internet and social media companies to keep pace with the moderation needs of the information posted on their platforms. Content moderation measures supported by artificial intelligence (AI) have emerged as important tools to address this challenge.

Social Distancing, Social Media and Section 230: DOJ Calls On Internet Companies to Provide Safer Online Communities

In this time of social distancing, working from home and school closures, people and businesses are relying on the internet more than ever to engage with friends, family, clients, consumers and the public at large. Social media and content-sharing websites are providing individual communities and the entire nation with 24/7 accessibility to forums for public discourse, communication and the dissemination of news.

The “Commander-in-Tweet” Returns: When a Social Media Account Creates a Public Forum, Critics Get to Stay

Two years ago, we wrote about a possible First Amendment challenge involving Donald Trump’s practice of blocking certain Twitter users from his @realDonaldTrump account. While it was unclear at the time of our post whether the Knight First Amendment Institute at Columbia University—an organization that uses strategic litigation to preserve the freedoms of speech and the press—would pursue further action, the Knight Institute filed a complaint a few days later in the U.S. District Court for the Southern District of New York against Trump, then-White House Press Secretary Sean Spicer and Daniel Scavino, the White House Director of Social Media and Assistant to the President. On July 9, 2019, the U.S. Court of Appeals for the Second Circuit issued a decision regarding this First Amendment issue.

Critics, Trolls and the Disgruntled Few: Reputation Management in the Age of Social Media

Managing reputation is tough when every person with a social media account is a potential critic with global reach. Organizations must contend with the concern that one negative social media posting could destroy hard-earned goodwill built up through years of thoughtful investments and interactions. While social media platforms allow for organizations to efficiently engage with their target audience, they also allow users to easily become the targets of reputational attacks, such as unfounded complaints or smear campaigns. The potential for posts to go viral, the ability for posts to remain with seeming permanence on the internet and the capacity for social media users to mask their identities make it difficult for organizations to mitigate the consequences of an online reputational attack. The good news is that victims of such an attack are not without recourse. Generally, there are four options for responding to a negative social media post:

- Do Not Respond to the Post

- Respond Directly to the Author

- Contact the Social Media Platform

- Litigation

If, when and how to respond are decisions that must be made by each organization individually after considering several factors, including those discussed below.

Keeping a Handle on Social Media Management by Employees

We often write about the benefits and pitfalls of social media usage. As companies and big businesses employ social media as an advertising mainstay, one pitfall we frequently encounter is the failure to properly manage a company’s social media handles. Like most pitfalls, these issues can be avoided. At a minimum, companies should have in place a robust and effective social media policy that is up to date on the current relevant laws, including privacy, advertising and employment laws. The policy should also include training, policing and reporting mechanisms that include clear guidance on what can and cannot come through on the company’s social media.

Hiring, Firing and HR Rewiring: Human Resources in the Age of Social Media

In the last decade, social media platforms have embedded themselves in the human resources function in companies worldwide. Companies like LinkedIn and Indeed have built empires based on clever deployment of social media to assist in the hiring and networking processes, even as human resource professionals use social media for more than finding the next great executive or software engineer to propel the business forward. Social media plays a key role in hiring, firing and all aspects of employee management and relations. Various studies and surveys have shown that up to 80% of all companies make some use of social media platforms in human resource decisions.

FOSTA and the Expansion of Corporate Liability for Social Media Companies

The March 21st passage of the Allow States and Victims to Fight Online Sex Trafficking Act (FOSTA) has dramatically altered the rules of engagement for social media companies. The new law amends the Communications Decency Act of 1996 and clarifies that it was never intended to legally protect websites that unlawfully promote and facilitate prostitution and human trafficking or websites that should have known their platforms were being utilized for such activities. The bill also creates new areas of civil liability for companies and necessitates more active monitoring of online accounts. In his recent Client Alert, our colleague William M. Sullivan, Jr. examines the immediate and future ramifications of the bill.

Boeing Decision Forges New Balance Between NLRA Rights and Social Media Policies

Under Section 7 of the National Labor Relations Act (NLRA), all employees have a right to engage in protected concerted activity, even if they are not unionized. Such activities include those performed for the mutual aid or protection of all employees, such as discussing the terms and conditions of employment. An employer is prohibited by the Act from interfering with, restraining or coercing employees in the exercise of their Section 7 rights. In the past decade, the National Labor Relations Board (NLRB), the agency that protects the rights of employees to join together and improve wages and working conditions, has decided a number of important cases that impact social media policies. In fact, many of the decisions have struck down social media policies as unenforceable under the NLRA. If any provision in a social media policy is vague or overbroad and can be read as restricting activities protected by Section 7, that provision will likely be found unlawful and unenforceable by the NLRB.

Stumbling “Blocks”: When Is Social Media Moderation a First Amendment Violation?

As we previously discussed in our post “The ‘Commander-in-Tweet’ and the First Amendment,” the President was criticized by the Knight First Amendment Institute for blocking certain Twitter users from his @realDonaldTrump account. According to the Knight Institute, President Trump’s Twitter account functions like a town hall meeting where the public can voice their views about government actions and, under the First Amendment, attendees cannot be excluded based on their views. Therefore, according to the Knight Institute, President Trump is violating the First Amendment by blocking users based on the content of their tweets. Subsequently, on July 11, 2017, the Knight Institute filed suit against President Trump and his communications team on this basis.

Internet & Social Media Law Blog
